AI platforms are in their infancy, but they are needed to ensure the success of AI within a company. Deploying AI without the support of a platform? Danger ahead. As companies increasingly make business decisions using AI algorithms, a broken machine learning pipeline lets algorithms ‘go rogue’, and the problems that follow can damage your company's reputation.

First, what is a machine learning pipeline? It's the process by which machine learning algorithms go from code to production use. The problem companies leveraging AI can run into is that, as the data changes, an algorithm no longer predicts accurately enough.

For companies making crucial business decisions based on insights derived from AI, wrong decisions can lead to wasted spend.

I recently saw a Data Science engineer from Facebook speak and learned some interesting things about their machine learning pipeline. In the past year, they have been able to hire 2x more engineers, who have created 3x more models and leveraged 3x more computation time on those models through their proprietary machine learning deployment platform. Facebook uses machine learning for everything from ranking ads to search, news feed, face recognition, and text translation. In their process, they have machine learning models that can take weeks to train, and AI is part of their crucial decision-making criteria. They are also focused heavily on reducing compute time, so they have even created ML models that predict which code changes and data sets may fail.

Facebook is one of the four leading companies in the world that use AI as part of their core business processes, but the practices they created can be leveraged as part of an AI platform. The ugly truth is that most AI implementations don't have a platform to support them. The current state of AI development tools simply doesn't provide one.

Current Practices

Here's how most companies are ‘doing AI’:

- A developer downloads a set of data onto their machine. They scrape data from multiple sources to aggregate, cleanse, and normalize it. Problem #1: Are the results repeatable? Is the data accurate?

- They train a model and once it meets an accuracy threshold, they create a service and roll it out. Problem #2: Many data scientists create crappy code that isn't reusable and isn't documented.

- They test the algorithm in production with real data in a trial run and check the results. Then they ‘set it and forget it.’ Problem #3: Many data scientists don't define long-term accuracy KPIs for their algorithms. As data changes, accuracy can change, and many companies aren't monitoring it.

- They create a similar permutation of the model for other use cases and rinse and repeat with training and deployment. Problem #4: No code reuse. Many algos can be re-used to solve multiple problems if designed correctly.

- Once someone figures out the algo has ‘gone rogue’ and isn't producing the accuracy needed, the data scientist repeats the first three steps and rolls out an update. The new algo is used in place of the old. Problem #5: You can't compare the before/after results between the old algo and the new algo. What if the old algo was better? What if the new algo introduces new issues? There's generally no ‘versioning’ of predictions.
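
Problem #3 is the easiest of these to start fixing. Here is a minimal sketch of an accuracy KPI that watches the most recent predictions and flags when accuracy falls below a threshold. The class name `AccuracyMonitor`, the window size, and the threshold are all assumptions made up for illustration, not part of any particular platform.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions (hypothetical helper)."""

    def __init__(self, window: int, threshold: float):
        self.threshold = threshold
        # A deque with maxlen drops the oldest outcome automatically.
        self.outcomes = deque(maxlen=window)

    def record(self, predicted, actual) -> None:
        # Store True for a correct prediction, False otherwise.
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        if not self.outcomes:
            return 1.0  # nothing recorded yet, so no evidence of a problem
        return sum(self.outcomes) / len(self.outcomes)

    def needs_attention(self) -> bool:
        # Only alert once the window is full, to avoid noise from a few samples.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.threshold


# Illustrative data: two correct predictions followed by two misses.
monitor = AccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [("spam", "spam"), ("ham", "ham"), ("spam", "ham"), ("ham", "spam")]:
    monitor.record(predicted, actual)

if monitor.needs_attention():
    print(f"Accuracy dropped to {monitor.accuracy():.0%}; time to retrain or roll back")
```

In practice the ground truth often arrives days or weeks after the prediction, so a real monitor would need to join predictions to outcomes later rather than record both at once.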

Pros/Cons to Using an AI Platform

Companies such as Facebook use a custom-built AI platform not only to make it faster, easier, and more cost-effective to create and reuse models, but also to make their process more foolproof.

Pros:

- An AI platform supports versioning of algorithms so you can compare results between different versions and ‘bake off’ which one works best in production.

- An AI platform encourages code reuse. You can see if existing algorithms can be leveraged on different data instead of reinventing the wheel.

- An AI platform integrates directly with data sources, making it easy to trace back which data was used to make a prediction. If you find bugs in your data, you can find which algorithms and business processes are affected.

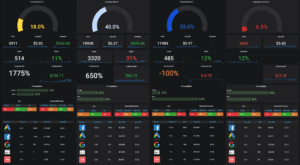

- AI platforms track KPIs such as accuracy, performance, and errors. This enables people to get alerted when problems happen.

- AI platforms support rollback. If a new algorithm performs worse, it's easy to roll back, or to check whether a bad training data set caused the drop in performance.
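
To make the versioning, bake-off, and rollback ideas concrete, here is a rough sketch of a tiny in-memory model registry. Everything in it (the `ModelRegistry` class, its method names, the toy threshold models, and the holdout data) is hypothetical and only meant to show the shape of the idea. A real platform would persist versions and evaluate on far more data.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence, Tuple

# For this sketch, a "model" is just a function from a score to a 0/1 label.
Model = Callable[[float], int]


@dataclass
class ModelRegistry:
    """Keeps every registered version and remembers deployment order (hypothetical)."""

    versions: Dict[str, Model] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)  # newest deployment last

    def register(self, version: str, model: Model) -> None:
        self.versions[version] = model

    def deploy(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(version)
        self.history.append(version)

    @property
    def live(self) -> str:
        return self.history[-1]

    def rollback(self) -> str:
        if len(self.history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self.history.pop()
        return self.live

    def bake_off(self, labeled: Sequence[Tuple[float, int]]) -> Dict[str, float]:
        # Score every registered version on the same labeled examples.
        return {
            version: sum(model(x) == y for x, y in labeled) / len(labeled)
            for version, model in self.versions.items()
        }


registry = ModelRegistry()
registry.register("v1", lambda x: int(x > 0.5))
registry.register("v2", lambda x: int(x > 0.8))
registry.deploy("v1")
registry.deploy("v2")

# Made-up holdout set of (input, expected label) pairs.
holdout = [(0.2, 0), (0.6, 1), (0.7, 1), (0.9, 1)]
scores = registry.bake_off(holdout)

# If the live version is not the best performer, go back to the previous one.
if scores[registry.live] < max(scores.values()):
    registry.rollback()

print(scores, "live:", registry.live)
```

Because old versions are never overwritten, the question "what if the old algo was better?" has an answer you can check rather than guess at.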

Cons:

- Many AI platforms are very expensive. Most are priced at the enterprise level.

- Many AI platforms have a tremendous learning curve. They can be the ‘kitchen sink’, supporting any and every framework and programming language, so an engineer has to learn a dozen or more languages to use the platform correctly. They also require multiple servers and more processing power just to support a basic installation.

- Many AI platforms are not flexible. It's hard to enhance and create something that isn't already packaged in the box.

- For non-enterprise solutions, the documentation is atrocious. I'm a fan of step-by-step instructions, and most companies and open source solutions don't provide them.

Atlas AI - Open Source Model Management

Atlas AI is an open source AI platform that enables users to:

- Quickly and easily add new algorithms for predictions

- Create a framework for prediction versioning (which prediction was created by which algorithm, and whether it is better or worse)

- Aggregate customer information in a central customer data platform

- Automate processes using AI.
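
To illustrate what prediction versioning means in general, here is a small sketch that tags every prediction with the algorithm version that produced it and then compares versions once the real outcomes are known. This is not Atlas AI's actual API. The `PredictionRecord` and `PredictionLog` names, the fields, and the sample customers are all assumptions invented for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PredictionRecord:
    entity_id: str
    algorithm_version: str
    predicted: str
    actual: Optional[str] = None  # filled in once the real outcome is known


class PredictionLog:
    """Hypothetical log that remembers which algorithm version made each prediction."""

    def __init__(self) -> None:
        self.records: List[PredictionRecord] = []

    def log(self, entity_id: str, algorithm_version: str, predicted: str) -> None:
        self.records.append(PredictionRecord(entity_id, algorithm_version, predicted))

    def resolve(self, entity_id: str, actual: str) -> None:
        # Attach the real outcome to every prediction made for this entity.
        for record in self.records:
            if record.entity_id == entity_id:
                record.actual = actual

    def accuracy_by_version(self) -> Dict[str, float]:
        hits: Dict[str, int] = defaultdict(int)
        totals: Dict[str, int] = defaultdict(int)
        for record in self.records:
            if record.actual is None:
                continue  # outcome not known yet, so skip it
            totals[record.algorithm_version] += 1
            hits[record.algorithm_version] += record.predicted == record.actual
        return {version: hits[version] / totals[version] for version in totals}


# Made-up churn predictions from an old (v1) and a new (v2) algorithm.
log = PredictionLog()
for customer, old, new in [("c1", "churn", "stay"), ("c2", "stay", "stay"), ("c3", "churn", "churn")]:
    log.log(customer, "v1", old)
    log.log(customer, "v2", new)

for customer, outcome in [("c1", "stay"), ("c2", "stay"), ("c3", "churn")]:
    log.resolve(customer, outcome)

version_accuracy = log.accuracy_by_version()
print(version_accuracy)
```

Running both versions side by side on the same customers is what makes the better/worse comparison fair, since each version is judged against identical outcomes.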